In Germany’s deregulated energy market, utilities, grid operators, and metering companies exchange millions of structured messages every month: meter readings, supplier switches, billing data, and master data updates. The messaging format is EDIFACT, governed locally by regulatory and industry bodies such as BNetzA and BDEW. When these bodies publish a new format version, every market participant must migrate by a fixed date. The day after cutover, the old format is no longer accepted. Non-compliant messages are rejected.

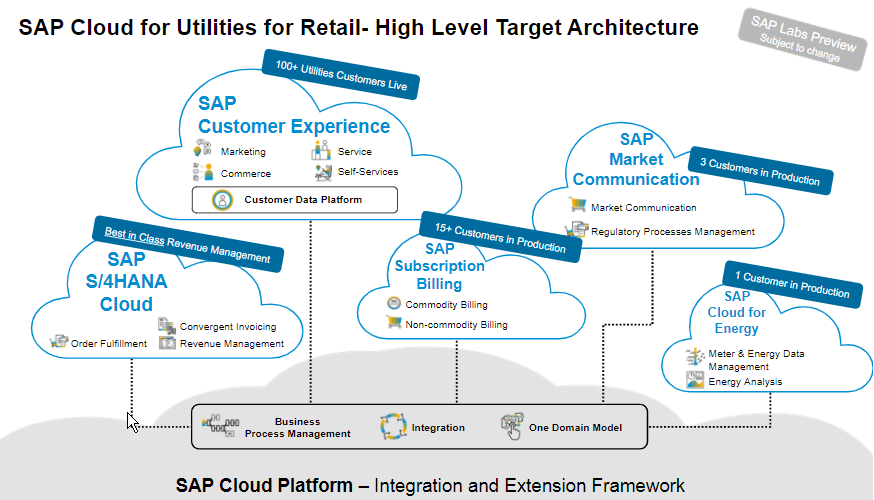

I worked on SAP Market Communication for Utilities, a cloud platform that handles this EDIFACT messaging for enterprise energy clients. My job was to coordinate engineering and infrastructure teams in three countries so that format cutovers happened without production failures, on a system processing $50M+ in annual transaction volume.

The Core Operating Constraint

EDIFACT format updates are mandatory regulatory migrations with hard deadlines and fixed effective dates. A utility that misses the cutover loses message exchange with grid operators. Supplier switches stall, meter readings bounce, and billing processes stop. For an energy provider serving hundreds of thousands of households, one day of message rejection can cascade into operational chaos.

The format changes themselves ranged from minor field additions to structural changes in message segments. A minor change might add a new qualifier to a segment. A structural change could reorganize how meter reading data is nested, breaking every parser that assumes the old layout. Both categories had the same deadline.

This created a specific product problem: the system had to absorb breaking changes, validate them against the new spec, and ship to production before the cutover date. The release plan therefore treated production readiness ahead of deadline day as a hard requirement.

What Actually Broke

Integration failures in EDIFACT systems follow a pattern. The message is syntactically correct (it parses) but semantically incorrect (its content violates business rules that the new format version introduces). A message might pass schema validation but fail because a newly required qualifier is missing, or because a segment that was optional became mandatory for a specific market process.
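To make the syntactic-versus-semantic distinction concrete, here is a minimal Python sketch. It is not the platform's code. The parser is deliberately simplified (it ignores the EDIFACT release character and the UNA service string), the sample message is illustrative rather than a complete valid interchange, and `RequiredQualifier` and both rules are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequiredQualifier:
    """Hypothetical business rule: a segment with this tag and qualifier must exist."""
    tag: str
    qualifier: str


def parse_segments(raw: str) -> list[list[str]]:
    """Split a simplified EDIFACT-style string into segments and data elements.

    Assumption: "'" terminates segments and "+" separates data elements.
    Release characters and the UNA service string are not handled.
    """
    segments = []
    for chunk in raw.strip().split("'"):
        chunk = chunk.strip()
        if chunk:
            segments.append(chunk.split("+"))
    return segments


def semantic_errors(segments: list[list[str]], rules: list[RequiredQualifier]) -> list[str]:
    """Return one error per rule that no segment in the message satisfies."""
    errors = []
    for rule in rules:
        found = any(
            seg[0] == rule.tag
            and len(seg) > 1
            and seg[1].split(":")[0] == rule.qualifier
            for seg in segments
        )
        if not found:
            errors.append(f"missing {rule.tag} with qualifier {rule.qualifier}")
    return errors


# Illustrative message: it parses cleanly, so the syntactic check passes.
message = "UNH+1+MSCONS:D:04B:UN'BGM+7+MSG001+9'DTM+137:202401011200:203'UNT+4+1'"
segments = parse_segments(message)

# Hypothetical rules for a new format version: the second one is not satisfied.
rules = [RequiredQualifier("DTM", "137"), RequiredQualifier("DTM", "163")]
errors = semantic_errors(segments, rules)
print(errors)
```

The message splits into four well-formed segments, yet the second rule still reports a missing qualifier. That is the failure class described above: nothing is wrong with the structure, only with what the new version expects it to contain.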

When I joined, the failure pattern was predictable: engineering implemented the format spec, QA tested against the spec document, and production still failed. The gap was between specification and real-world interpretation. Counterparties (grid operators, metering companies) interpreted the same spec differently. One operator expected a conditional segment to always be present. Another rejected messages that included an optional segment their system did not support. The spec defined structure. Participant validation behavior varied by implementation.

I started tracking rejection reasons from production incidents and mapping them to specific counterparties. Within two months, the picture was clear: roughly 40% of production failures came from counterparty-specific validation behavior that was not documented in the official format specification. The remaining 60% were genuine implementation gaps in our own system.
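The tracking itself needs very little machinery. The sketch below shows one way to group rejection reasons by counterparty and compute the share not covered by the official spec. The `Rejection` record, its field names, and every row of sample data are invented for illustration. They are not the production data.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Rejection:
    counterparty: str
    reason: str
    in_spec: bool  # True if the violated rule is documented in the official spec


def summarize(rejections: list[Rejection]) -> tuple[dict[str, Counter], float]:
    """Group reasons per counterparty and return the undocumented share."""
    reasons_by_counterparty: dict[str, Counter] = defaultdict(Counter)
    for r in rejections:
        reasons_by_counterparty[r.counterparty][r.reason] += 1
    undocumented = sum(1 for r in rejections if not r.in_spec)
    share = undocumented / len(rejections) if rejections else 0.0
    return dict(reasons_by_counterparty), share


# Invented sample records, chosen only to keep the arithmetic easy to follow.
sample = [
    Rejection("grid_operator_a", "conditional segment missing", in_spec=False),
    Rejection("grid_operator_a", "conditional segment missing", in_spec=False),
    Rejection("metering_co_b", "mandatory qualifier missing", in_spec=True),
    Rejection("grid_operator_c", "wrong date format", in_spec=True),
    Rejection("metering_co_b", "segment count mismatch", in_spec=True),
]

reasons, undocumented_share = summarize(sample)
print(reasons["grid_operator_a"].most_common(1))
print(f"undocumented share: {undocumented_share:.0%}")
```

The per-counterparty view is the useful part. A reason that recurs for a single counterparty and does not trace back to the spec is a candidate for a counterparty-specific rule rather than a bug fix.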

Fixing Upstream Instead of Patching Downstream

The default approach was reactive: a message failed, support investigated, engineering patched, and the fix shipped. In a regulated cutover with a hard deadline, that cycle guarantees customer-facing failures, because every fix lands only after messages have been rejected, business processes have stalled, and customers have escalated.

I restructured the approach around three changes.

Counterparty-specific validation profiles. I built a registry of counterparty-specific behaviors: which grid operators enforced stricter rules, which metering companies rejected optional segments, and which market processes had the highest failure rates. Engineering used these profiles to test integrations before cutover.
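As a rough illustration of what a profile can look like in code, the sketch below models two of the behaviors described above: a counterparty that treats a conditional segment as mandatory, and one that rejects an optional segment. The `CounterpartyProfile` shape, the counterparty names, and the segment choices are assumptions made for this example, not the structure of the actual registry.

```python
from dataclasses import dataclass, field


@dataclass
class CounterpartyProfile:
    """Hypothetical record of how one counterparty validates inbound messages."""
    name: str
    # Conditional segments this counterparty treats as mandatory.
    always_required: set[str] = field(default_factory=set)
    # Optional segments this counterparty refuses to accept.
    rejected_segments: set[str] = field(default_factory=set)


def check_against_profile(segment_tags: list[str], profile: CounterpartyProfile) -> list[str]:
    """Return the problems this counterparty would likely raise for a message."""
    present = set(segment_tags)
    problems = []
    for tag in sorted(profile.always_required - present):
        problems.append(f"{profile.name}: expects segment {tag}")
    for tag in sorted(present & profile.rejected_segments):
        problems.append(f"{profile.name}: rejects segment {tag}")
    return problems


# Invented registry entries for illustration.
registry = {
    "grid_operator_a": CounterpartyProfile("grid_operator_a", always_required={"RFF"}),
    "metering_co_b": CounterpartyProfile("metering_co_b", rejected_segments={"FTX"}),
}

# Segment tags of one outbound message, checked against every profile.
outbound_tags = ["UNH", "BGM", "DTM", "FTX", "UNT"]
findings = [
    problem
    for profile in registry.values()
    for problem in check_against_profile(outbound_tags, profile)
]
for finding in findings:
    print(finding)
```

Keeping profiles as data rather than as branches in the validation code means a newly observed counterparty quirk becomes a registry entry, and the same test run covers it at the next cutover.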

Staged cutover rehearsals. Before the regulatory deadline, I coordinated dry runs with the top 15 counterparties by message volume. Each rehearsal sent test messages in the new format and captured rejections. This surfaced issues weeks before the deadline instead of on cutover day. Engineering teams in Germany, China, and India worked the rejection backlog in parallel, with each team owning specific message types.

Validation-first deployment. I changed the release sequence. Instead of deploying format changes and validation together, validation rules shipped first. The system ran in shadow mode: it processed messages in the old format while logging what the new validation would have flagged. This gave us a failure preview before production messages were at risk.
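Shadow mode can be sketched in a few lines of Python. The example below is a simplified illustration under stated assumptions: messages are plain dictionaries, and `current_rules`, `next_version_rules`, and the `reading_quality` field are hypothetical stand-ins. The point is the shape of the control flow. Only the active rules decide whether a message is accepted, while the upcoming rules run alongside and write to a log.

```python
import logging
from typing import Callable

logger = logging.getLogger("shadow_validation")

# A validator takes a message and returns a list of findings (empty means valid).
Validator = Callable[[dict], list[str]]


def current_rules(message: dict) -> list[str]:
    """Hypothetical rules for the format version that is live today."""
    return [] if "meter_id" in message else ["meter_id missing"]


def next_version_rules(message: dict) -> list[str]:
    """Hypothetical rules for the upcoming version: one extra mandatory field."""
    findings = current_rules(message)
    if "reading_quality" not in message:
        findings.append("reading_quality missing")
    return findings


def process_with_shadow(message: dict, active: Validator, shadow: Validator) -> bool:
    """Accept or reject using the active rules; only log what the shadow rules flag."""
    try:
        for finding in shadow(message):
            logger.warning("shadow finding for %s: %s", message.get("id"), finding)
    except Exception:
        # A bug in the not-yet-live rules must never affect live processing.
        logger.exception("shadow validator failed for %s", message.get("id"))
    return not active(message)


logging.basicConfig(level=logging.WARNING)
accepted = process_with_shadow(
    {"id": "m1", "meter_id": "X-100"}, current_rules, next_version_rules
)
print(accepted)
```

The message is accepted because it satisfies the live rules, and the log records that it would fail once the new version takes effect. Wrapping the shadow call in its own `try` block is a design choice worth keeping in any variant: the preview is only safe if it cannot change the outcome for production traffic.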

Cross-Region Coordination Under a Fixed Deadline

The engineering team spanned three countries. Format specification expertise sat in Germany (close to the regulatory source). Implementation capacity sat in China and India. The timezone spread meant a 14-hour feedback loop if handoffs were not structured.

I owned the cutover plan: which message types shipped first, which counterparty integrations were highest risk, and what rollback looked like if a deployment broke production messaging. The sequencing followed risk, not feature completeness. High-volume message types (supplier switches, meter readings) shipped and stabilized first. Lower-volume processes followed.

The coordination mechanism was simple: a shared tracking sheet with every message type, counterparty, test status, rejection log, and owner. Teams updated it daily, and it served as the single source of truth. When a rejection came in from a German grid operator at 4pm CET, the China team picked it up at 9am China Standard Time the next morning with full context already documented.

Results

The format cutover shipped on schedule with zero production outages on cutover day. Integration time for new format versions dropped by 25% compared to the previous regulatory cycle because counterparty validation profiles and staged rehearsals eliminated the post-cutover scramble. System uptime held at 99.9% through the migration period.

The counterparty validation registry became a permanent asset. Subsequent format updates reused the profiles, and engineering teams used them as the first line of testing rather than discovering counterparty behavior in production.

What This Taught Me

Three lessons from SAP that I keep applying.

Enterprise platform reliability is a people-coordination problem wearing a technical hat. The format spec was 200+ pages. The technical implementation was straightforward. Cutovers failed in the gap between specification and counterparty behavior. Closing that gap required relationship-building with counterparty operations teams alongside parsing logic improvements.

Validation is cheaper than correction. Every failure caught before cutover cost minutes of engineering time. Every failure caught after cutover cost hours of investigation, customer communication, and emergency patching. The staged rehearsal approach cost two weeks of coordination. It saved weeks of incident response.

When deadlines and counterparties are fixed, information flow is the primary lever. The regulatory timeline was fixed. Grid operators interpreted the spec independently. The practical lever was information velocity: how fast rejection data reached the right engineer, how clearly the failure was documented, and how quickly fixes were deployed.

SAP gave me the enterprise platform instinct: multi-tenant systems where reliability is non-negotiable, regulatory constraints become product requirements, and integration failures have business consequences far beyond a bad user experience. That instinct shaped how I approached every integration and compliance system I built afterward.

If the move-failure-upstream framing is useful, I wrote about applying the same principle in cross-border payments at Airwallex: "Moving Failure Upstream for SWIFT Validation at Airwallex".